The term "hacker" once conjured images of shadowy figures breaking into systems under the cover of night. But in a world increasingly dependent on digital infrastructure, the line between good and bad hackers has blurred—and sometimes reversed.

Enter ethical hacking: the deliberate act of testing and probing networks, apps, and systems—not to break them for gain, but to find weaknesses before real criminals do. These professionals, often called “white hats,” are employed by companies, governments, and NGOs to protect digital ecosystems in a time when cyberattacks are not just common, but catastrophic.

As with all powerful tools, ethical hacking comes with serious ethical and legal dilemmas. Who gets to hack? Under what rules? And what happens when even good intentions go wrong?

🎯 What Is Ethical Hacking?

Ethical hackers use the same techniques as malicious actors—port scanning, social engineering, buffer overflow exploits—but they do so with explicit permission. Their job is to anticipate threats and shore up defenses, acting like digital vaccinators for an immune system that can’t afford to fail.

Activities include:

-

Penetration testing

-

Vulnerability assessments

-

Red team vs blue team simulations

-

Social engineering scenario drills

-

Bug bounty program participation

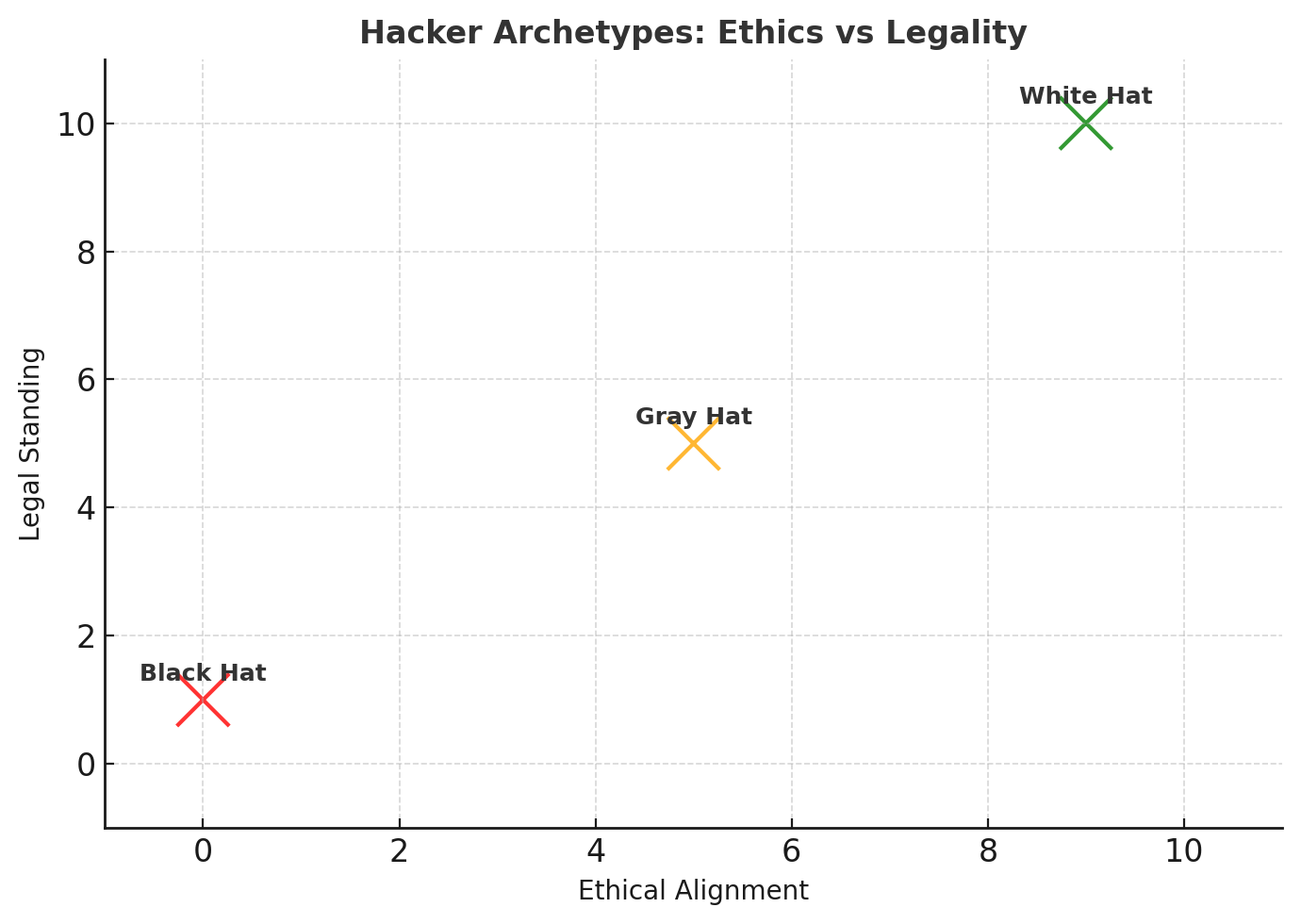

But not all hacking exists in neatly defined ethical spaces.

⚖️ The Ethical Dilemmas of “Good Hacking”

🧠 Consent vs. Impact

-

Is it still ethical to expose a critical vulnerability if the organization hasn’t given consent?

-

What if publicizing it forces a fix that otherwise wouldn’t happen?

💣 Weaponized Disclosure

-

When is it right to go public with a zero-day exploit?

-

Some argue public pressure accelerates security. Others say it exposes users to harm.

🏛️ Legal Gray Zones

Even ethical hackers face:

-

CFAA charges (Computer Fraud and Abuse Act in the U.S.)

-

Potential civil lawsuits from companies not willing to admit flaws

-

Retaliation when exposing government or political vulnerabilities

🧪 Real-World Examples

-

🏥 2021: A Dutch ethical hacker accessed Donald Trump’s Twitter account using “maga2020!” as the password—without malicious intent. Despite his warning, the act was technically illegal.

-

🛒 2020: Shopify quietly rewarded a white hat for discovering a flaw that could expose millions of transactions. The hacker received no public credit, raising questions about transparency and recognition.

-

🔐 Bug bounty programs like HackerOne and Bugcrowd offer legal, paid pathways—but what happens when companies reject valid vulnerabilities?

🌐 Ethical Frameworks Emerging

Efforts to formalize ethical hacking include:

-

Codes of conduct from OWASP and EC-Council

-

Disclosure protocols like coordinated vulnerability disclosure (CVD)

-

Safe harbor clauses in bug bounty terms

-

Global charters, including EU-based ethics councils for cyber research

Still, global legal alignment is far from reality. What's ethical in Sweden may be criminal in Texas.

🔮 Looking Ahead

Expect ethical hacking to become:

-

Standard practice in cybersecurity audits

-

A required role in AI system oversight and bias testing

-

More tightly governed by regional laws and licensing bodies

AI-generated code, edge computing, and quantum threats will all demand next-gen ethical hackers—with tools more powerful than ever before.

🧾 Conclusion: Hacking With Honor

Ethical hacking is not just about technical skill—it’s about judgment, context, and accountability. In a world where the next breach could crash a hospital, rig an election, or upend financial systems, white hats play a role as critical as any emergency responder.

But as with all hero myths, reality is complicated. Good intentions don’t always guarantee good outcomes. That’s why ethical hacking isn’t just a job—it’s a philosophy. One that needs rules, reflection, and relentless scrutiny.

🆕 Latest Updates on Ethical Hacking (September 2025)

-

Bug bounty economy booming. HackerOne reported total payouts surpassing $300 million to security researchers, with average rewards for critical vulnerabilities up 25% compared to 2023.

-

Disputed reports on the rise. More cases are surfacing where companies dismiss vulnerabilities as “low impact,” only to face real-world exploits later. This has fueled debates about transparency and fairness in disclosure programs.

-

Government involvement. The EU is discussing a unified “white hat license”, giving researchers legal protection when acting in good faith. Meanwhile, in the U.S., the CFAA (Computer Fraud and Abuse Act) is still being used against researchers in certain cases, creating legal uncertainty.

-

AI under the microscope. New bug bounty programs now include prompt injection attacks on large language models (LLMs). Ethical hackers are increasingly testing AI systems, which are quickly becoming high-value targets.

Honestly, I see this as both progress and warning. On one hand, higher payouts and wider recognition prove that ethical hackers are finally being valued as a frontline defense in cybersecurity. On the other hand, the lack of fairness in how some companies handle disclosures undermines trust and pushes researchers toward the gray zone.

I strongly believe the world needs a global “safe harbor” framework for ethical hackers — a system where good-faith security research is always protected, regardless of jurisdiction. Right now, what counts as ethical in Europe may still be criminal in the U.S., and that inconsistency is dangerous.

The AI angle is even more pressing. In my view, within the next 2–3 years, AI vulnerability testing will become its own discipline. If we don’t establish clear norms soon, we may find ourselves in an arms race — not against servers and networks, but against algorithms that already control healthcare, finance, and transportation.

👉 Bottom line: ethical hacking remains a paradox. Without it, we’re exposed; with it, we’re still stuck in legal and ethical limbo. I believe the future of this profession will depend less on technical tools — and more on whether we solve its legal and moral contradictions.